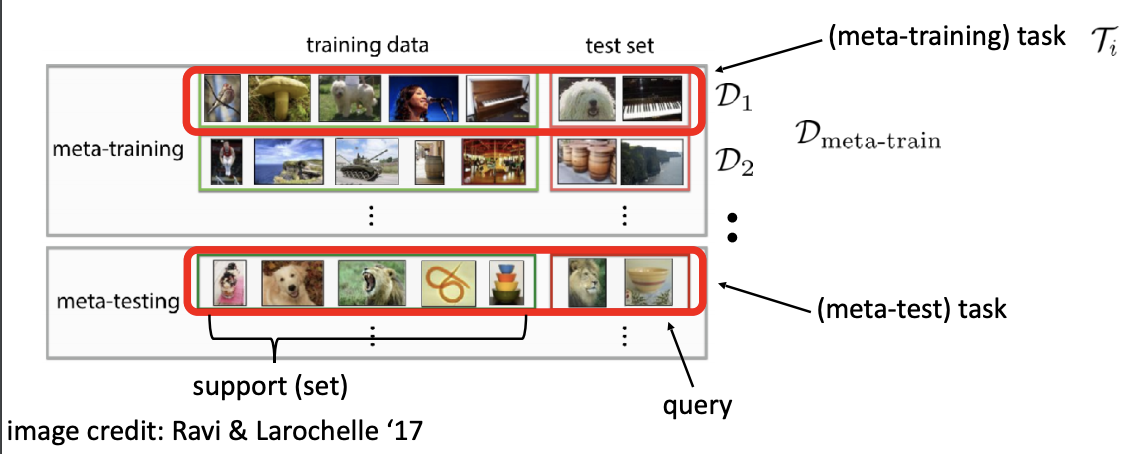

Meta-learning is an exciting area of research that tackles the problem of learning to learn. The goal is to design models that can learn new skills or examples, or quickly adapt to new environments.

Metric-based meta-learning is closely related to few-shot learning: each task provides only a few labelled examples, and the network is trained to learn a metric space from them. Concretely, a neural network learns an embedding in which examples of the same class lie close together, so a new example can be classified by its distance to the few labelled examples available.

Abstract: Meta-learning empowers artificial intelligence to increase its efficiency by learning how to learn. Unlocking this potential involves overcoming a challenging meta-optimisation problem. We propose an algorithm that tackles this problem by letting the meta-learner teach itself. The algorithm first bootstraps a target from the meta-learner, then optimises the meta-learner by minimising the distance to that target under a chosen (pseudo-)metric. Focusing on meta-learning with gradients, we establish conditions that guarantee performance improvements and show that the metric can be used to control meta-optimisation. Meanwhile, the bootstrapping mechanism can extend the effective meta-learning horizon without requiring backpropagation through all updates. We achieve a new state of the art for model-free agents on the Atari ALE benchmark and demonstrate that the method yields both performance and efficiency gains in multi-task meta-learning. Finally, we explore how bootstrapping opens up new possibilities and find that it can meta-learn efficient exploration in an epsilon-greedy Q-learning agent, without backpropagating through the update rule.
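The bootstrapping idea in the abstract can be illustrated on a toy problem. The sketch below is not the authors' implementation; it assumes a hypothetical one-dimensional quadratic loss f(x) = 0.5 * a * (x - c)^2, plain gradient descent as the inner learner, the step size `eta` as the only meta-parameter, and squared Euclidean distance as the (pseudo-)metric. The learner takes `K` differentiable steps, a target is bootstrapped by running `L` further steps from the result and treating it as a constant (a stop-gradient), and `eta` is nudged so the `K`-step result moves toward that target. Only the `K` steps are differentiated, which mirrors the claim that bootstrapping extends the horizon without backpropagating through every update. The function names and the exact choice of target are illustrative assumptions.

```python
"""Toy sketch of bootstrapped meta-learning (assumptions noted in comments).

Hypothetical setup: the learner minimises f(x) = 0.5 * a * (x - c)**2 with
gradient descent, the meta-parameter is the step size eta, and the
(pseudo-)metric is the squared Euclidean distance.
"""


def inner_grad(x: float, a: float, c: float) -> float:
    """Gradient of the toy inner loss f(x) = 0.5 * a * (x - c)**2."""
    return a * (x - c)


def unroll(x0: float, eta: float, steps: int, a: float, c: float) -> tuple[float, float]:
    """Apply `steps` updates x <- x - eta * f'(x).

    Also returns d x / d eta, accumulated in forward mode
    (x0 is treated as independent of eta).
    """
    x, dx_deta = x0, 0.0
    for _ in range(steps):
        g = inner_grad(x, a, c)
        # Differentiate x_new = x - eta * a * (x - c) with respect to eta.
        dx_deta = dx_deta - g - eta * a * dx_deta
        x = x - eta * g
    return x, dx_deta


def bootstrapped_meta_step(eta, x0, a, c, K, L, meta_lr):
    """One meta-update of eta toward a bootstrapped target."""
    # 1) K differentiable steps of the meta-learned update.
    x_k, dxk_deta = unroll(x0, eta, K, a, c)
    # 2) Bootstrap a target by running L more steps from x_k with the current
    #    eta. The target is a constant (stop-gradient): its derivative is discarded.
    target, _ = unroll(x_k, eta, L, a, c)
    # 3) Meta-loss 0.5 * (x_k - target)**2; chain rule through the K steps only.
    meta_grad = (x_k - target) * dxk_deta
    return eta - meta_lr * meta_grad


def run(eta=0.1, iterations=200, K=1, L=5, meta_lr=0.05, x0=0.0, a=1.0, c=3.0):
    """Repeat meta-updates, restarting the learner from x0 each time (an assumption)."""
    for _ in range(iterations):
        eta = bootstrapped_meta_step(eta, x0, a, c, K, L, meta_lr)
    return eta


if __name__ == "__main__":
    print(f"meta-learned step size: {run():.4f}")
```

With these defaults `eta` rises from 0.1 toward 1.0, which for `a = 1` is the step size that reaches the minimum in a single update. In this toy setting the target is always "further along" than the `K`-step result, so matching it pushes the meta-learner toward faster progress without differentiating through the `L` bootstrap steps.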
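Returning to metric-based meta-learning, one well-known instance is prototypical networks: each class is summarised by the mean embedding (prototype) of its few labelled examples, and a new example is assigned to the class whose prototype is nearest. The sketch below shows only this classification step. It assumes a fixed, hypothetical linear embedding `embed` as a stand-in for a trained neural network; in practice the embedding is learned across many few-shot tasks so that distances in the metric space become informative. All names and the toy data are illustrative.

```python
import math


def embed(x: list[float], weights: list[list[float]]) -> list[float]:
    """Hypothetical embedding f(x) = W x, standing in for a trained network."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in weights]


def squared_distance(u: list[float], v: list[float]) -> float:
    return sum((ui - vi) ** 2 for ui, vi in zip(u, v))


def prototypes(support: dict[str, list[list[float]]], weights) -> dict[str, list[float]]:
    """Mean embedding of each class's few labelled support examples."""
    protos = {}
    for label, examples in support.items():
        embs = [embed(x, weights) for x in examples]
        dim = len(embs[0])
        protos[label] = [sum(e[d] for e in embs) / len(embs) for d in range(dim)]
    return protos


def classify(query: list[float], protos: dict[str, list[float]], weights):
    """Softmax over negative squared distances; returns (best label, probabilities)."""
    q = embed(query, weights)
    logits = {label: -squared_distance(q, p) for label, p in protos.items()}
    m = max(logits.values())  # subtract the max for numerical stability
    exp = {label: math.exp(l - m) for label, l in logits.items()}
    z = sum(exp.values())
    probs = {label: e / z for label, e in exp.items()}
    return max(probs, key=probs.get), probs


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]  # identity embedding for the toy example
    support = {"cat": [[0.0, 0.1], [0.2, 0.0]], "dog": [[2.0, 2.1], [1.9, 2.0]]}
    protos = prototypes(support, W)
    print(classify([0.1, 0.2], protos, W)[0])
```

Using a softmax over negative distances, rather than a hard nearest-neighbour rule, gives class probabilities that could be trained with a cross-entropy loss when the embedding is learned.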